Introduction & Problem Statement

In today’s fast-paced e-commerce world, providing relevant and personalized search results is crucial for keeping customers engaged and driving sales. Platforms like Amazon typically use advanced machine learning models trained on large amounts of historical data—such as user interactions, purchase histories, and product details—to rank and recommend products. These models perform well for common queries and popular items but can struggle when faced with diverse and changing user search behaviors.

A key drawback of traditional query generation methods is that the synthetic queries often read too much like the product descriptions themselves. This lack of variety makes it harder for relevance classifiers to learn to handle the wide range of real user queries, which can reduce the effectiveness of search results.

Another important factor is ranking products not only by how well they match a search query but also by their significance within a network of related products, user actions, and purchase patterns. Network-based algorithms such as PageRank can identify these connections and help highlight products that are more influential or relevant. Combining these graph-based techniques with machine learning models can lead to better search outcomes.

A common challenge across e-commerce platforms is the cold-start problem: how to deliver relevant results for new products or rare queries when there is little to no historical data available. Because traditional ranking models rely heavily on past user behavior, they often struggle in these situations.

The approach proposed here addresses these challenges by using adversarial reinforcement learning (RL) to generate a broader range of challenging search queries, which helps train more robust relevance classifiers. Combined with a PageRank-inspired component that accounts for product network influence, this can improve the quality of search results, especially for new or less frequently searched products. This, in turn, enhances the overall user experience and can increase conversion rates on platforms like Amazon.

Objectives

- To improve query diversity using adversarial reinforcement learning (RL)

- To integrate PageRank for product influence ranking

- To address the cold-start problem in search ranking

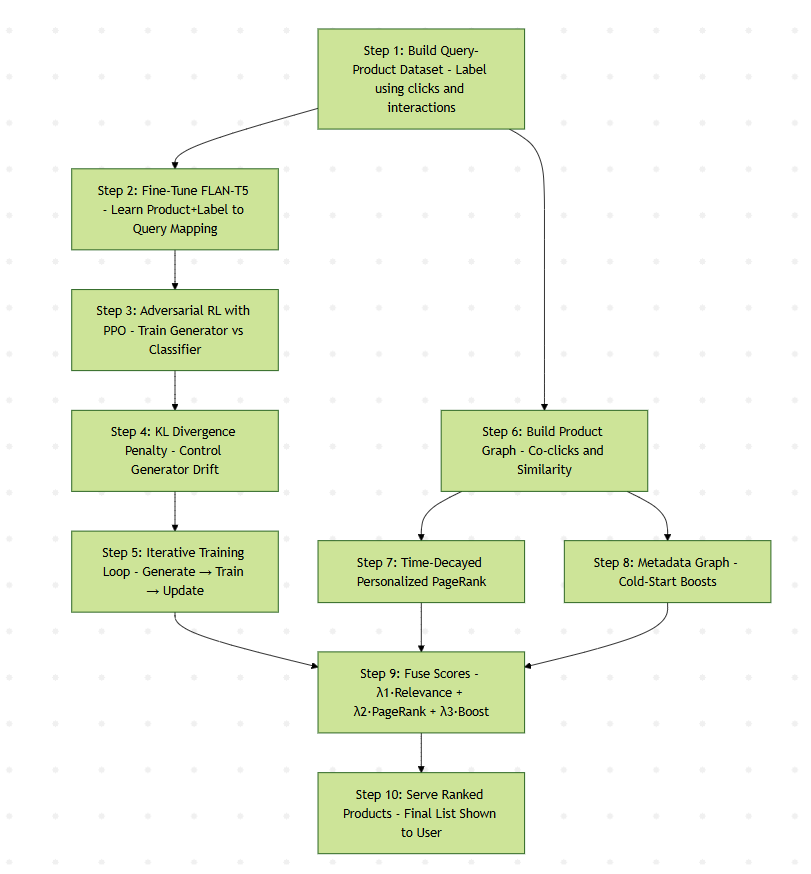

System Flow Diagram

Proposed Solution

To improve the accuracy and robustness of product search—especially in scenarios involving new or low-interaction items—this solution combines adversarial reinforcement learning for generating diverse, realistic queries with a network-based PageRank algorithm that captures product importance based on behavioral signals. By fusing query relevance, product influence, and metadata-driven cold-start support into a unified ranking strategy, the system delivers more relevant results, boosts discoverability of new products, and enhances overall search quality.

Step 1:

Build the Query–Product Interaction Dataset

Input: Historical e-commerce search logs (queries, clicked products, interactions).

Process:

- Keep queries for which at least 5% of clicks land on products from a target category (e.g., "pharmacy").

- For each query, collect clicked products and label them as in-category or out-of-category.

- Expand the dataset by pulling all queries that led to these products.

- Assign each query a binary label (e.g., "pharmacy" or "non-pharmacy") based on interaction volume.

Query: “fever reducer” → clicked product: “Dolo 650” → label: pharmacy

Query: “vitamin supplement” → clicked product: “Multivitamin Gummies” → label: pharmacy
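The filtering, expansion, and labeling steps above can be sketched in a few lines of Python. This is a minimal illustration rather than the actual pipeline: the `(query, product)` log format, the `product_category` lookup, the function names, and the majority-click labeling rule are all assumptions, since the text only states that labels are derived from interaction volume.

```python
from collections import defaultdict

def category_click_share(click_log, product_category, target="pharmacy"):
    """Return {query: share of its clicks that landed on target-category products}."""
    total = defaultdict(int)
    in_target = defaultdict(int)
    for query, product in click_log:
        total[query] += 1
        if product_category.get(product) == target:
            in_target[query] += 1
    return {q: in_target[q] / total[q] for q in total}

def build_dataset(click_log, product_category, target="pharmacy", min_share=0.05):
    """Return {query: label} following the filter -> expand -> label steps."""
    shares = category_click_share(click_log, product_category, target)
    # Seed queries: at least min_share of their clicks fall in the target category.
    seeds = {q for q, s in shares.items() if s >= min_share}
    # Products clicked from any seed query (in-category or not).
    products = {p for q, p in click_log if q in seeds}
    # Expansion: every query that led to one of those products.
    expanded = {q for q, p in click_log if p in products}
    # Assumed labeling rule: in-category when most of the query's clicks are.
    return {q: (target if shares[q] > 0.5 else f"non-{target}") for q in expanded}
```

The 0.5 majority threshold is a placeholder; any rule based on click volume could be substituted in the last line.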

Step 2:

Train the Query Generator (FLAN-T5)

- Fine-tune a pre-trained LLM (e.g., FLAN-T5-XL) to learn mappings of (product, label) → likely user queries.

- This trains the model to generate meaningful synthetic queries for products.

Example:

Product: “Crocin Pain Relief”

Generated Queries:

• “headache medicine” (Exact Match)

• “fever tablet” (Partial Match)
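One way to prepare fine-tuning data for a text-to-text model such as FLAN-T5 is to serialize each (product, label) pair into an input prompt and use an observed query as the target. The sketch below shows only that formatting step. The prompt wording and the `to_training_pair` helper are hypothetical, and the fine-tuning call itself is omitted.

```python
def to_training_pair(product, label, query):
    """Serialize one (product, label) -> query example as text-to-text input/target."""
    # Assumed prompt template; the real wording is a design choice.
    prompt = f"Generate a search query for this {label} product: {product}"
    return {"input": prompt, "target": query}

# Training pairs built from the example above.
pairs = [
    to_training_pair("Crocin Pain Relief", "pharmacy", "headache medicine"),
    to_training_pair("Crocin Pain Relief", "pharmacy", "fever tablet"),
]
```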

Step 3:

Improve Query Diversity via Adversarial Reinforcement Learning (Adv-RL)

- Use PPO (Proximal Policy Optimization) to optimize the generator in an adversarial loop.

- Reward is given when a generated query increases classification loss (i.e., challenges the classifier).

Adversarial Setup:

- Generator (G): produces synthetic queries.

- Classifier (C): predicts if queries belong to the product category.

- If the classifier struggles, the generator receives a higher reward.

Product: “Dolo 650”

Generated Query: “pain-relief tablet for adults” — less direct, more challenging than “fever tablet”
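The reward signal in this setup can be illustrated with binary cross-entropy: the less confident the classifier is in the correct label for a generated query, the larger the loss and therefore the reward. This sketch assumes the classifier outputs a probability that the query belongs to the category. `adversarial_reward` is an illustrative name, the probabilities are made up, and the PPO update itself is not shown.

```python
import math

def adversarial_reward(p_in_category, true_label):
    """Binary cross-entropy of the classifier on one generated query.

    p_in_category: classifier probability that the query is in-category.
    true_label: 1 if the source product is in-category, else 0.
    """
    eps = 1e-12  # clamp to avoid log(0)
    p = min(max(p_in_category, eps), 1.0 - eps)
    return -math.log(p) if true_label == 1 else -math.log(1.0 - p)

# Hypothetical classifier outputs for two queries generated from "Dolo 650".
easy = adversarial_reward(0.95, 1)  # e.g. "fever tablet": classifier is confident
hard = adversarial_reward(0.55, 1)  # e.g. "pain-relief tablet for adults"
```

Here `hard` is larger than `easy`, so the less direct query earns the generator more reward.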

Step 4:

KL Divergence Penalty for Quality Control

- Add a KL divergence penalty between the current generator policy and the fine-tuned model from Step 2.

- Prevents the generator from drifting too far (e.g., generating nonsensical queries like “microwave cleaner” for a pharmacy product).
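A common way to apply such a penalty is to subtract a scaled KL estimate from the task reward before the PPO update. The sketch below assumes per-token log-probabilities of the generated query are available from both the current policy and the frozen fine-tuned model. The coefficient `beta` and the function name are illustrative choices, not values taken from this proposal.

```python
def kl_penalized_reward(task_reward, policy_logprobs, reference_logprobs, beta=0.1):
    """Subtract a sampled KL estimate from the adversarial reward.

    The sum of (log pi(token) - log pi_ref(token)) over the generated tokens is a
    single-sample estimate of KL(pi || pi_ref) for that query.
    """
    kl_estimate = sum(lp - lr for lp, lr in zip(policy_logprobs, reference_logprobs))
    return task_reward - beta * kl_estimate
```

A query that the current policy finds far more likely than the reference model does produces a large positive estimate, so its reward shrinks and the generator is pulled back toward plausible queries.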

Step 5:

Iterative Training Loop

- Generate synthetic queries using the current generator.

- Train classifier on real + synthetic data.

- Use classifier feedback to update the generator (via PPO).

- Repeat for multiple cycles (e.g., 4 rounds) to refine both models.
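The loop structure can be outlined as follows. The three callables are placeholders for the real generator sampling, classifier training, and PPO update, none of which are specified here. The sketch only fixes the order of operations within each round.

```python
def run_adversarial_rounds(generate, train_classifier, update_generator,
                           real_data, rounds=4):
    """Alternate classifier training and generator updates for a fixed number of rounds."""
    classifier = None
    for _ in range(rounds):
        synthetic = generate()                                 # 1. sample synthetic queries
        classifier = train_classifier(real_data + synthetic)   # 2. train on real + synthetic
        update_generator(classifier, synthetic)                # 3. update generator from classifier feedback
    return classifier
```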

Step 6:

Build a Product Graph

- Construct a graph where:

  - Nodes = products

  - Edges = co-clicks, co-purchases, or semantic similarity

  - Edge weights reflect behavioral frequency

Example:

• Headphones ↔ Bluetooth speakers (often co-clicked)

• Protein powder ↔ Vitamin C tablets (co-purchased)
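A behavioral graph of this kind can be built by counting how often two products appear in the same session. The sketch below assumes the input is a list of sessions, each a list of clicked or purchased product names, and uses raw co-occurrence counts as edge weights. The `build_product_graph` name and the session format are assumptions, and semantic-similarity edges are not covered.

```python
from collections import defaultdict
from itertools import combinations

def build_product_graph(sessions):
    """Return an undirected weighted graph {product: {neighbor: co-occurrence count}}."""
    graph = defaultdict(lambda: defaultdict(float))
    for products in sessions:
        # Count each unordered product pair once per session.
        for a, b in combinations(sorted(set(products)), 2):
            graph[a][b] += 1.0
            graph[b][a] += 1.0
    # Convert to plain dicts so later lookups do not create empty entries.
    return {node: dict(neighbors) for node, neighbors in graph.items()}
```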

Step 7:

Apply Time-Decayed Personalized PageRank

- Run personalized PageRank seeded with the products the user clicked recently.

- Add a time-decay factor so that newer interactions matter more.

- Result: An influence score for each product based on its importance in the interaction network.
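The idea can be sketched with a plain power iteration in which the teleport (restart) distribution is concentrated on the user's recent clicks, each weighted by an exponential time decay. This is an illustrative implementation under several assumptions: timestamps are in days, the decay uses a half-life, and `half_life`, `damping`, and `iterations` are placeholder defaults rather than values from this proposal.

```python
def personalized_pagerank(graph, recent_clicks, now, half_life=7.0,
                          damping=0.85, iterations=50):
    """graph: {node: {neighbor: weight}}; recent_clicks: [(product, timestamp_in_days)]."""
    nodes = set(graph) | {m for nbrs in graph.values() for m in nbrs}
    # Teleport distribution: recent clicks, down-weighted by age.
    seed = {n: 0.0 for n in nodes}
    for product, clicked_at in recent_clicks:
        if product in seed:
            seed[product] += 0.5 ** ((now - clicked_at) / half_life)
    total = sum(seed.values())
    if total > 0:
        seed = {n: w / total for n, w in seed.items()}
    else:
        # No usable click history: fall back to a uniform restart.
        seed = {n: 1.0 / len(nodes) for n in nodes}
    rank = dict(seed)
    for _ in range(iterations):
        nxt = {n: (1.0 - damping) * seed[n] for n in nodes}
        for n in nodes:
            out = graph.get(n, {})
            out_weight = sum(out.values())
            if out_weight > 0:
                # Spread rank along outgoing edges in proportion to edge weight.
                for m, w in out.items():
                    nxt[m] += damping * rank[n] * w / out_weight
            else:
                # Dangling node: return its mass to the teleport distribution.
                for m in nodes:
                    nxt[m] += damping * rank[n] * seed[m]
        rank = nxt
    return rank
```

The returned scores sum to 1, so they can be compared across products for a given user. A shorter `half_life` makes the ranking react more strongly to the latest clicks.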

Step 8:

Boost Cold-Start Products with Metadata Graph

For products with few/no edges:

- Create proxy edges using metadata (e.g., same brand, price range, or category).

- Use cosine similarity between product vectors to assign weights.

Example:

New Product: “Organic Zinc Supplement”

Proxy Edge: similar to “Nature’s Bounty Zinc 50mg” → the cold-start product now receives PageRank influence
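Proxy edges of this kind can be sketched by comparing a new product's metadata vector against those of products already in the graph and linking it to the closest ones. How the vectors are built (brand, price range, category, or text embeddings) is left open here. The `top_k` and `min_similarity` thresholds, the function names, and the example vectors are all assumptions.

```python
import math

def cosine(u, v):
    """Cosine similarity of two equal-length numeric vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0

def add_proxy_edges(graph, vectors, new_product, top_k=3, min_similarity=0.5):
    """Link new_product to its most similar existing products, weighted by similarity."""
    scored = [
        (cosine(vectors[new_product], vec), product)
        for product, vec in vectors.items()
        if product != new_product and product in graph
    ]
    scored.sort(reverse=True)
    for similarity, product in scored[:top_k]:
        if similarity >= min_similarity:
            graph.setdefault(new_product, {})[product] = similarity
            graph.setdefault(product, {})[new_product] = similarity
    return graph
```

Because similarity weights lie in [0, 1] while behavioral weights may be raw counts, the two would likely need rescaling to a common range before running PageRank over the combined graph.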

Step 9:

Fuse Scores for Final Ranking

For each product p, query q, and user u, compute:

FinalScore(p | q, u) = λ₁ · Relevance(p | q) + λ₂ · PageRank(p | u) + λ₃ · ColdStartBoost(p)

Where:

- λ₁: weight for query–product relevance score from classifier

- λ₂: weight for product network importance

- λ₃: weight for cold-start correction

These weights are tuned using A/B tests and validation metrics (e.g., PR-AUC, NDCG@10).
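The weighted combination can be written directly as a small scoring function. The default weights below are arbitrary placeholders, not tuned values, and the sketch assumes the three component scores have already been scaled to comparable ranges.

```python
def final_score(relevance, pagerank, cold_start_boost, weights=(0.6, 0.3, 0.1)):
    """Weighted sum of the three component scores; weights = (lambda1, lambda2, lambda3)."""
    lam1, lam2, lam3 = weights
    return lam1 * relevance + lam2 * pagerank + lam3 * cold_start_boost

def rank_products(candidates, weights=(0.6, 0.3, 0.1)):
    """candidates: {product: (relevance, pagerank, cold_start_boost)}; returns names, best first."""
    return sorted(
        candidates,
        key=lambda product: final_score(*candidates[product], weights),
        reverse=True,
    )
```

Shifting weight from λ₁ toward λ₂ moves network-central products up the list even when their query relevance is lower, which is the trade-off the A/B tests are meant to settle.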

Step 10:

Serve Final Ranked Product List

- Display the top-ranked products based on the FinalScore.

- Ensures:

  - High relevance to query

  - Product influence from behavioral network

  - Visibility for new products through metadata-based boosts

Query: “best Bluetooth headphones”

- Classifier finds highly relevant matches: “Sony WH-1000XM5”, “Bose QuietComfort 45”

- PageRank boosts “AirPods Max” based on high co-click centrality

- A new brand “Infinity Glide 4000” appears due to metadata-based cold-start edge

Ranked Results:

1. Sony WH-1000XM5

2. Bose QC 45

3. AirPods Max

4. Infinity Glide 4000

5. JBL Tune 760NC

Business Impact

- Boosts discovery of cold-start products by generating meaningful synthetic queries.

- Improves product ranking quality by combining query relevance and network-based importance.

- Drives engagement and conversions by surfacing both accurate and influential products.

- Reduces dependency on manual labeling through adversarial, self-supervised query generation.

Future Implications

- Incorporate user personalization into PageRank to tailor rankings.

- Use Graph Neural Networks for richer product relationships.

- Extend query generation to multiple languages for global scale.

- Incorporate downstream user feedback (e.g., purchases, dwell time) into the generator’s reward.

Conclusion

- The combined approach improves search robustness and ranking effectiveness, especially for new or underrepresented products.

- It aligns well with Amazon’s goal of offering relevant, timely, and high-impact product recommendations.